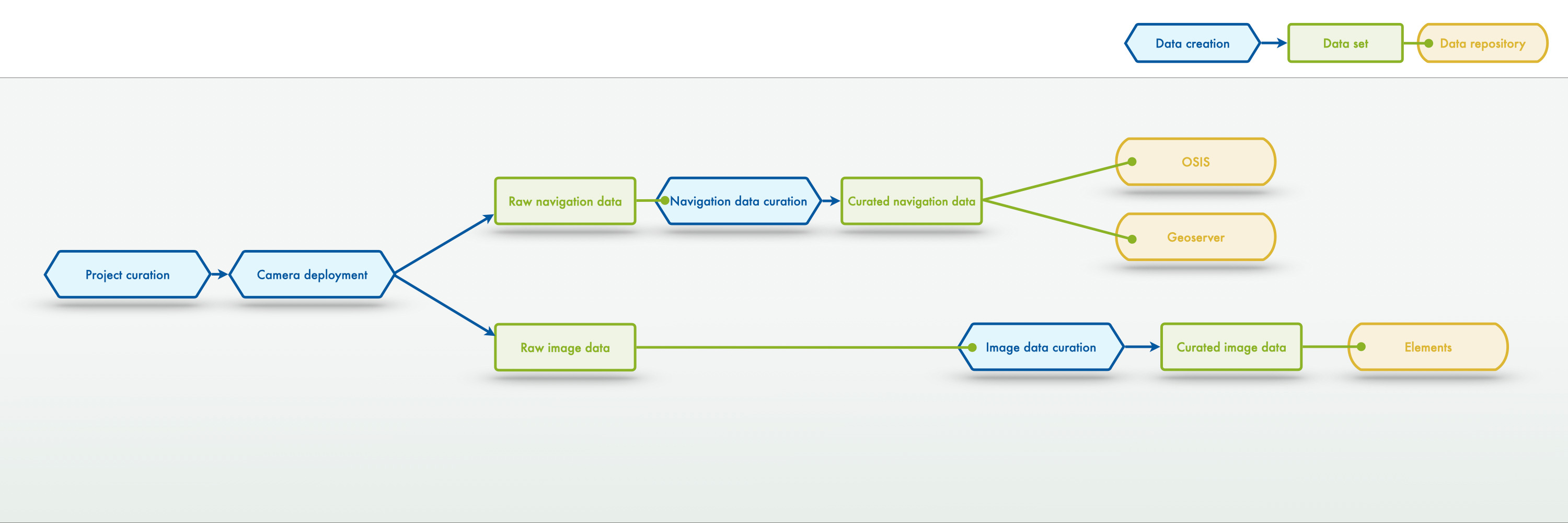

High-level SOP on the general image curation workflow

The overall image curation workflow from the project planning phase until the data publication in the open repositories

Image data management - all

for one single deployment - a close-up of the project management workflow

For image data, the general project data management workflow consists of several high-level processes and data entities, which are managed in multiple infrastructures.

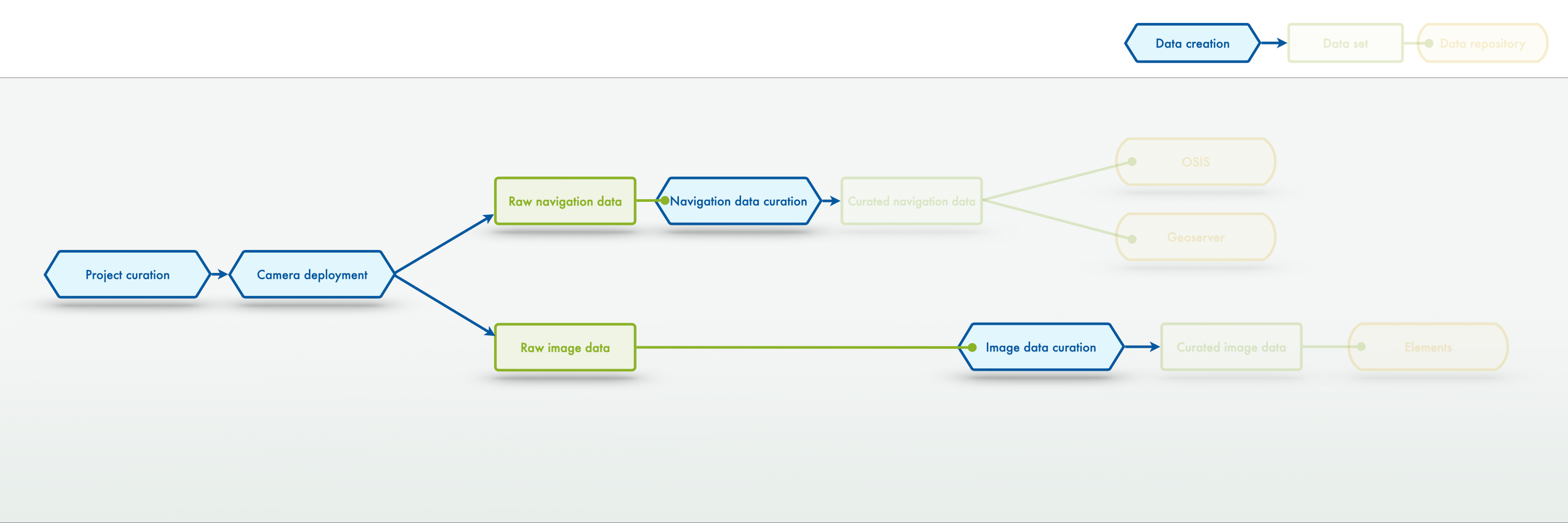

Image data management - data creation

for one single deployment - a close-up of the project management workflow

Note that the data creation part of the general workflow includes the curation processes for both the navigation data and the image data.

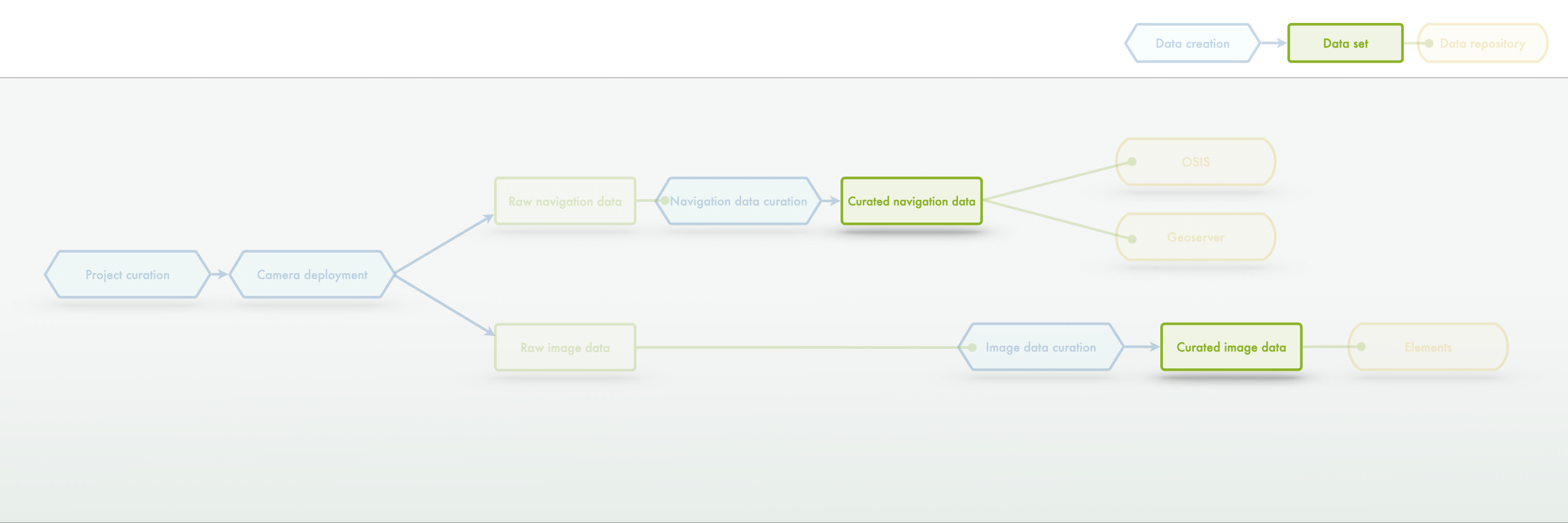

Image data management - data sets

for one single deployment - a close-up of the project management workflow

The central data entities are the curated (i.e. quality-controlled and processed) navigation data and the curated (i.e. quality-controlled and processed) image data.

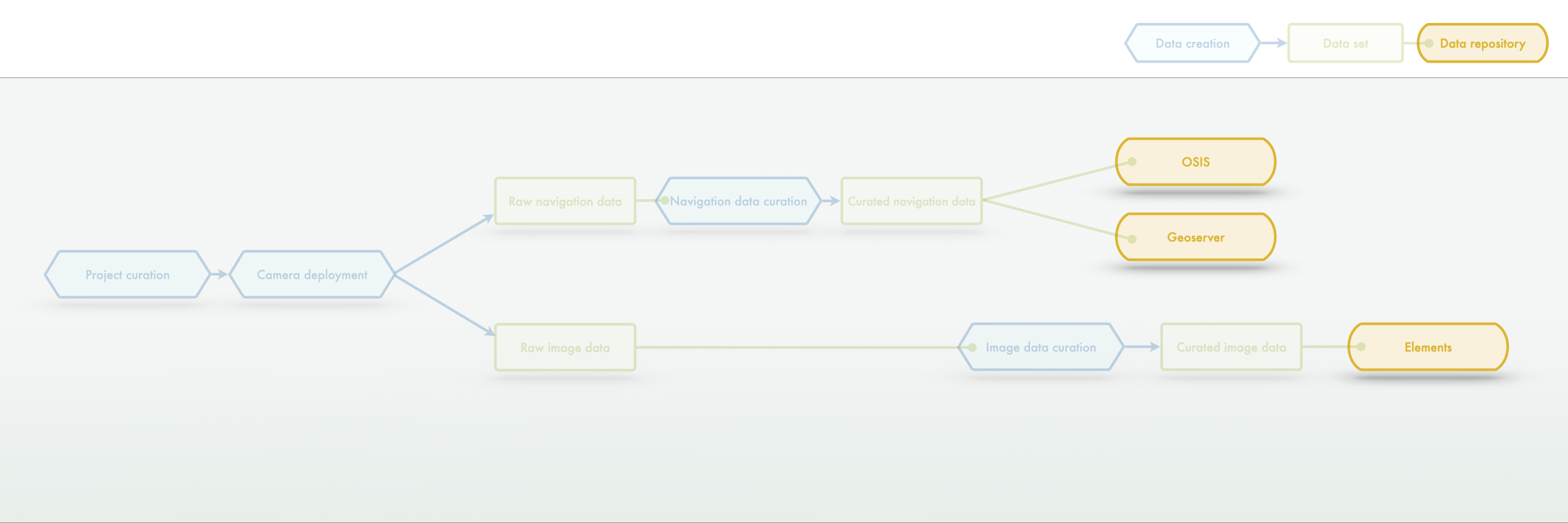

Image data management - data repositories

for one single deployment - a close-up of the project management workflow

Sharing and/or publication of the data entities of the image curation workflow is facilitated in different infrastructures. The Elements media asset management system contains the work repository of the imagery. Other platforms are better suited for collaborative data exchange, e.g. OSIS (note: other HGF centers might put their own data repository here) or a geoserver.

The following section zooms in further and looks at specific aspects of the image curation workflow in more detail, including the associated documentation entities.

Details of the image curation process as close-ups of the overall image curation workflow

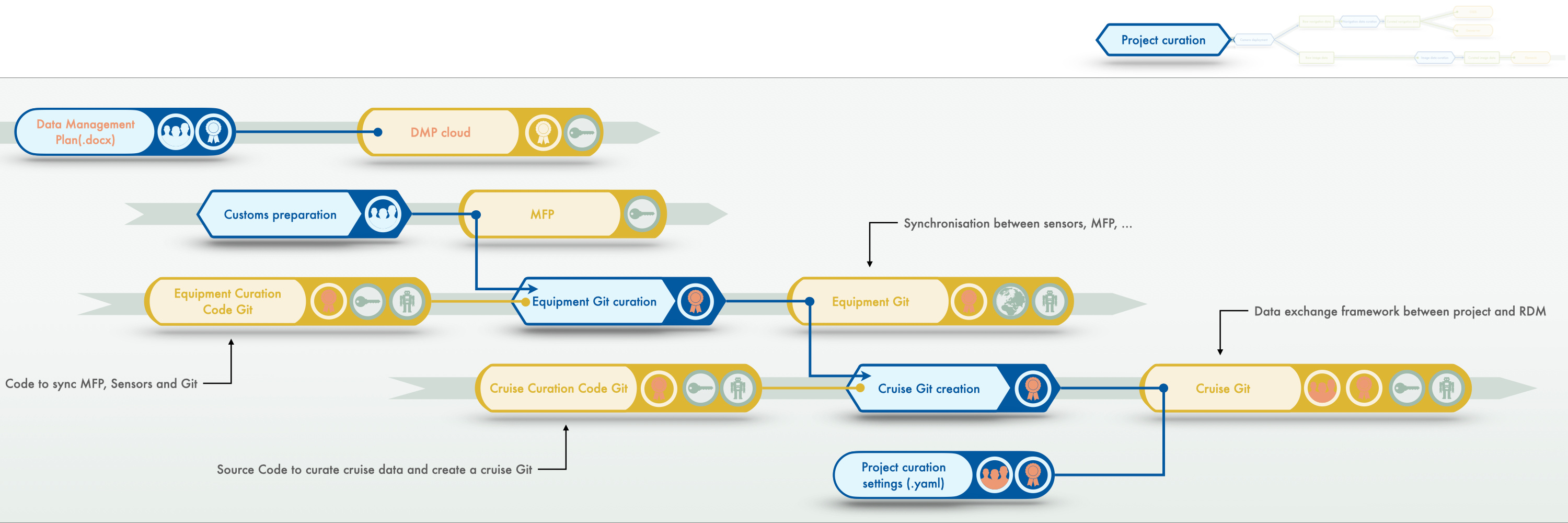

Project curation (step 1/5)

for one single deployment - a close-up of the image data management workflow

Project curation starts before a project has been applied for!

- In order to establish the FAIR data creation of any project, a data management plan (DMP) needs to be developed by the researchers and the RDM. This DMP needs to reside in a shared infrastructure (e.g. a cloud folder) which is maintained by the RDM team.

- Equipment that creates image data (or navigation data) needs to be well-defined, ideally in a central or shared infrastructure. At GEOMAR this is done in the Marine Facilities Planning (MFP) tool. Equipment information from this source needs to be regularly synchronized with other such repositories like AWI sensors, the DSHIP devices, the OSIS devices etc. At GEOMAR, synchronization is facilitated by a Git repository bundling all information from the various sources. This is a background activity conducted by the RDM team and not directly related to the project data management.

- Setting up a shared working space between researchers and the RDM for a specific project. This can come in the form of a Git repository for a project (or cruise). This repository is semi-automatically filled by information available in the DMP and the equipment Git. In the shared project space, one essential documentation entity is a machine-readable file containing a multitude of overarching project information: the project curation setting *.yaml file.

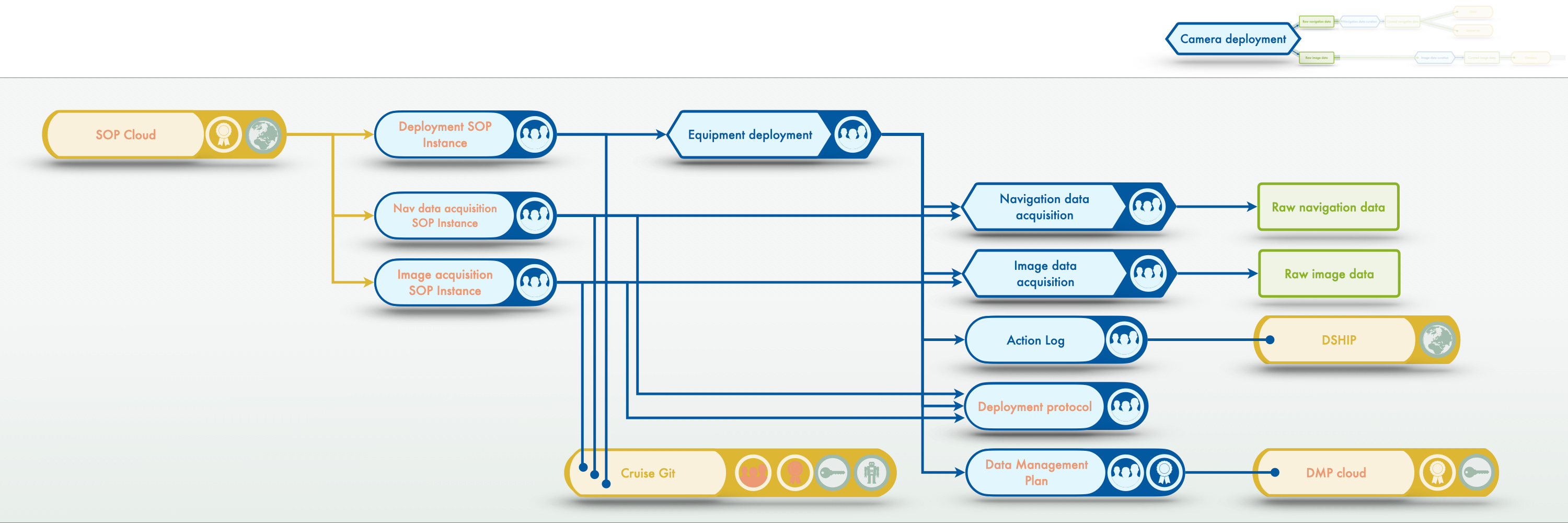

Camera deployment (step 2/5)

for one single deployment - a close-up of the image data management workflow

Deploying the cameras and acquiring imagery is the sole responsibility of the researchers. Information on these processes needs to become available to the RDM and/or publicly. SOPs on deploying cameras and acquiring imagery need to be created by the researchers and can be placed in a central information hub for others to benefit from this knowledge: the SOP cloud. While the SOP cloud contains general SOPs, which can be seen as SOP templates, an equipment deployment requires creating instances of these SOPs, which are then populated with information on conducting the SOP for this specific project or deployment. These instances need to be accessible by the researchers and the RDM and contribute required information to subsequent processing steps and documentation entities. One key entity is the deployment protocol (some see this as the instance of the deployment SOP), for which currently no infrastructure solution exists at GEOMAR. The deployment SOP instances also feed information into the DMP, underlining its role as a living document.

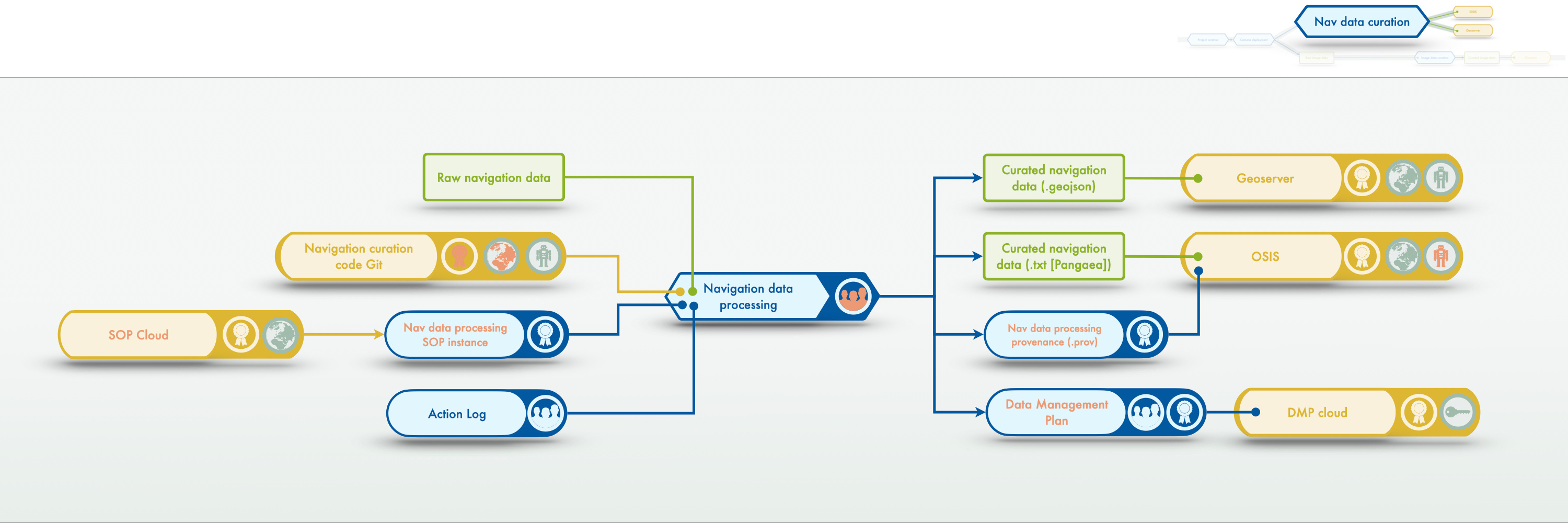

Navigation data curation (step 3/5)

for one single deployment - a close-up of the image data management workflow

Processing the navigation data should be done according to an SOP for this task. The processing itself can be done with Python code provided by the MareHub AG. This processing requires input from the action log.

Outputs of the navigation data processing are data files (.txt and .geojson) for the data infrastructures and a file documenting the provenance of the processing steps. This provenance file needs to be publicly available to make the navigation data FAIR. Again, the processing has an effect on the DMP which needs to be updated accordingly.
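The two kinds of output described above can be illustrated with a minimal sketch. This is not the MareHub AG code; the function names `track_to_geojson` and `provenance_record`, the provenance fields, the input file name and the example positions are all assumptions made for illustration. It only shows how a time-ordered track could be expressed as a GeoJSON feature and how each processing step could be documented next to it.

```python
import json
from datetime import datetime, timezone


def track_to_geojson(fixes):
    """Convert (utc_time, latitude, longitude) fixes into a GeoJSON LineString feature."""
    ordered = sorted(fixes, key=lambda fix: fix[0])  # sort by time
    return {
        "type": "Feature",
        "geometry": {
            "type": "LineString",
            # GeoJSON coordinate order is [longitude, latitude]
            "coordinates": [[lon, lat] for _, lat, lon in ordered],
        },
        "properties": {
            "start": ordered[0][0].isoformat(),
            "end": ordered[-1][0].isoformat(),
        },
    }


def provenance_record(step, inputs, parameters):
    """Describe one processing step (hypothetical fields, not a fixed schema)."""
    return {
        "step": step,
        "inputs": inputs,
        "parameters": parameters,
        "executed": datetime.now(timezone.utc).isoformat(),
    }


# Made-up example positions, deliberately out of order.
fixes = [
    (datetime(2024, 1, 1, 12, 0, 10, tzinfo=timezone.utc), 54.3301, 10.1801),
    (datetime(2024, 1, 1, 12, 0, 0, tzinfo=timezone.utc), 54.3300, 10.1800),
]
feature = track_to_geojson(fixes)
provenance = [provenance_record("sort_by_time", ["hypothetical_raw_nav.txt"], {})]

geojson_text = json.dumps(feature, indent=2)        # content for the .geojson file
provenance_text = json.dumps(provenance, indent=2)  # content for the provenance file
```

Keeping the provenance as a list of step records means each additional processing step (e.g. outlier removal or smoothing) only appends one entry.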

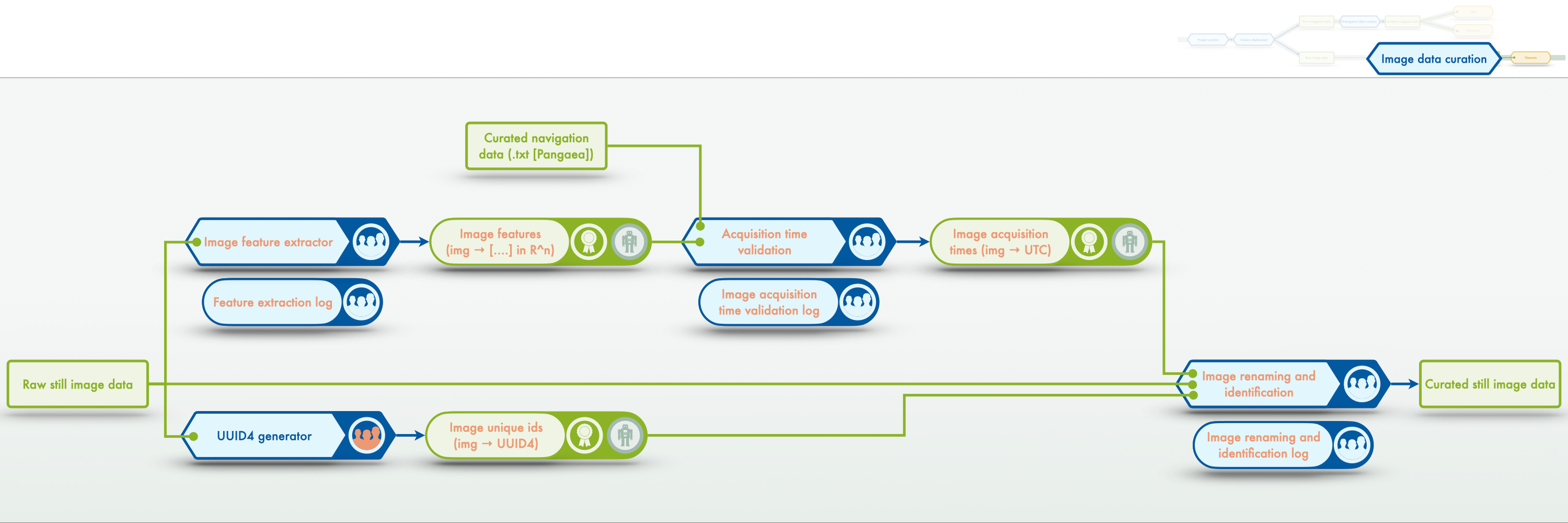

Image data curation (step 4/5)

for one single deployment - a close-up of the image data management workflow

Curation of the image data is split into two parts here. The goal of the process is to create an iFDO (image FAIR digital object) and a pFDO (proxy FAIR digital object) of the image data set. The two key components for that are i) making sure that the image acquisition time is correct (to link the image data with other data and metadata) and ii) assigning a unique ID (UUID4, i.e. random) to each image. Once the acquisition time has been checked and a UUID has been incorporated into the image header, the files can be renamed on disk, resulting in the curated image data set.
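As a minimal sketch of these two components, the following example corrects a known camera clock offset, draws a random UUID4 per image and derives a new file name from the corrected time. The function name `curate_image_names`, the naming pattern and the `EVENT_CAM` prefix are assumptions for illustration only. The sketch plans the renaming but does not touch files on disk, and it does not write the UUID into the image header, which would require an additional metadata tool.

```python
import uuid
from datetime import datetime, timedelta, timezone


def curate_image_names(images, clock_offset, prefix):
    """Return one record per image with corrected time, UUID4 and planned file name.

    images: list of (original_filename, camera_time_utc)
    clock_offset: timedelta added to the camera time to obtain the true UTC time
    prefix: hypothetical naming prefix, e.g. an event and sensor identifier
    """
    records = []
    for filename, camera_time in images:
        corrected = camera_time + clock_offset
        extension = filename.rsplit(".", 1)[-1].lower()
        records.append({
            "original": filename,
            "datetime": corrected.isoformat(),
            "uuid": str(uuid.uuid4()),  # random (version 4) UUID
            "curated": f"{prefix}_{corrected:%Y%m%d_%H%M%S}.{extension}",
        })
    return records


# Made-up example: the camera clock was two seconds behind UTC.
images = [("IMG_0001.JPG", datetime(2024, 1, 1, 11, 59, 58, tzinfo=timezone.utc))]
records = curate_image_names(images, timedelta(seconds=2), "EVENT_CAM")
```

Returning a record per image, rather than renaming immediately, keeps the mapping from original to curated name available for the provenance documentation.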

Image data curation (step 5/5)

for one single deployment - a close-up of the image data management workflow

Once the curated image data is ready, two additional processes can create the remaining required information for the iFDO and pFDO: i) scale determination provides the resolution of the images (i.e. the size of one pixel in SI units) and ii) file hashing provides a value to check the file integrity later on. All the data and process documentation entities can now be bundled into the iFDO and pFDO. During this process, provenance information on the processing itself is recorded and published alongside the image metadata. iFDOs enable FAIRness of images. They have a use of their own (and thus should be shared through OSIS) and they facilitate using the image data (and thus should be shared through Elements).
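Both processes can be sketched in a few lines. The text above does not specify a hash algorithm or a scale determination method, so this example assumes SHA-256 for the file hash and a simple pinhole geometry (camera looking straight down at a flat seafloor from a known altitude) for the pixel size. The function names and example values are hypothetical.

```python
import hashlib
import math
import os
import tempfile


def sha256_of_file(path, chunk_size=1 << 20):
    """Hash a file in chunks so that large images do not need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def pixel_size_m(altitude_m, horizontal_fov_deg, image_width_px):
    """Approximate size of one pixel in metres (assumes nadir view, flat ground)."""
    footprint_width_m = 2.0 * altitude_m * math.tan(math.radians(horizontal_fov_deg) / 2.0)
    return footprint_width_m / image_width_px


# Made-up example: hash a small temporary file and compute a pixel size.
with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "example.bin")
    with open(path, "wb") as handle:
        handle.write(b"abc")
    example_hash = sha256_of_file(path)

# 2 m altitude, 90 degree field of view, 4000 px wide image: about 1 mm per pixel.
example_scale = pixel_size_m(2.0, 90.0, 4000)
```

Recomputing the hash after a transfer and comparing it with the stored value is what makes the later integrity check possible.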
